-

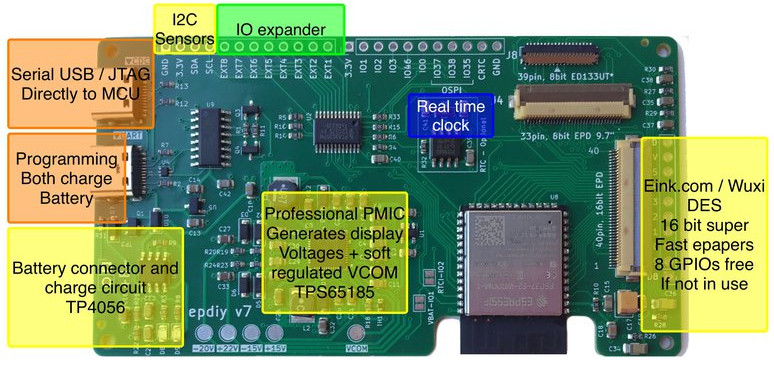

epdiy V7 S3 parallel epaper controller

I wanted to announce that the hardware for epdiy version 7, the latest hardware for the project designed by Valentin Roland, is ready to be tested. You can find the original hardware in the s3_lcd branch; this is my custom modified V7, which exposes 3 connectors: (Now already merged in main branch) I’ve also been testing…

-

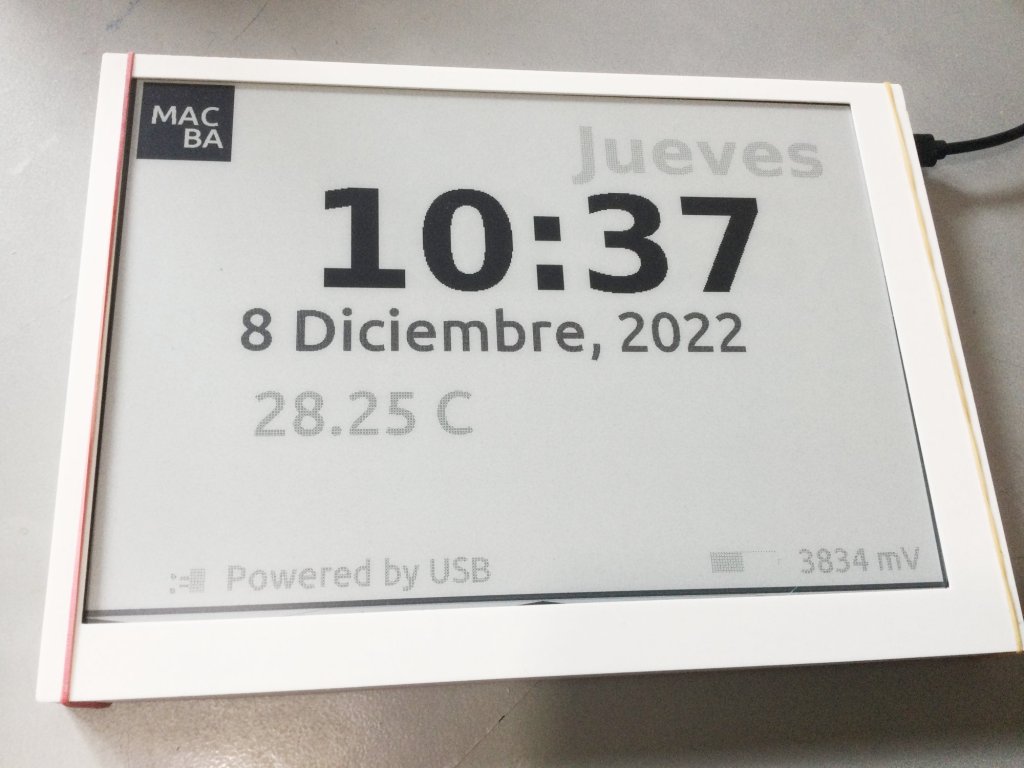

New cases for the 9.7″ parallel displays and proposal for CLB Club

This might be a continuation of the Cinwrite DEXA epaper controller that we designed just after partnering with Freddie from Good-Display.com. The idea is to prepare open source firmware that is ready to be used with different examples and two target controllers: 1 – DEXA-C097 from Good-Display, which additionally needs my Cinwrite ESP32-S3 SPI controller. Optionally…

-

Testing touch on small SPI epaper displays

Our FT6X36 touch component, now compatible with IDF version 5, is ready to be tested. There are two affordable models that I would like to cover in this post. The first one is the 400×300 FT6336 from Good Display; we can see this one in action (2nd video; the 1st still had the wrong waveform). It’s important to know…

-

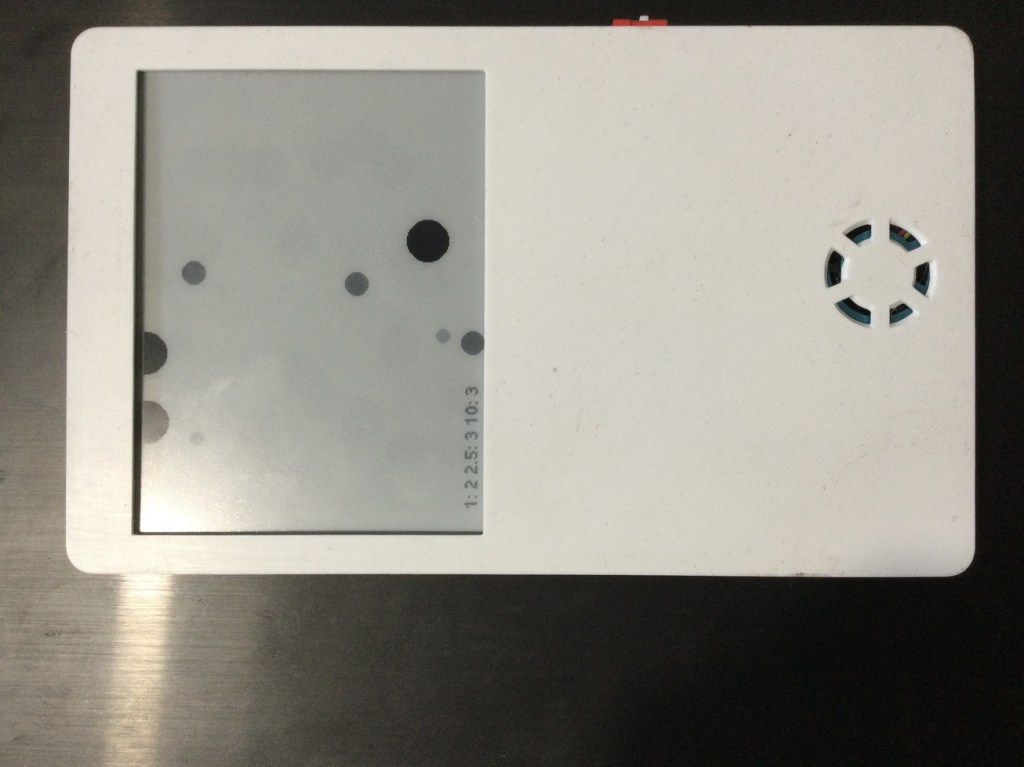

Dust sensor data representation

The idea of using an HM3301 laser dust sensor was to make a data representation that, instead of boring numbers, shows a more visually appealing image representing particles and their size. For that we thought about taking a reading every 2 minutes and using a bi-stable display with 4 grays. Components used: The build work! First you should…

-

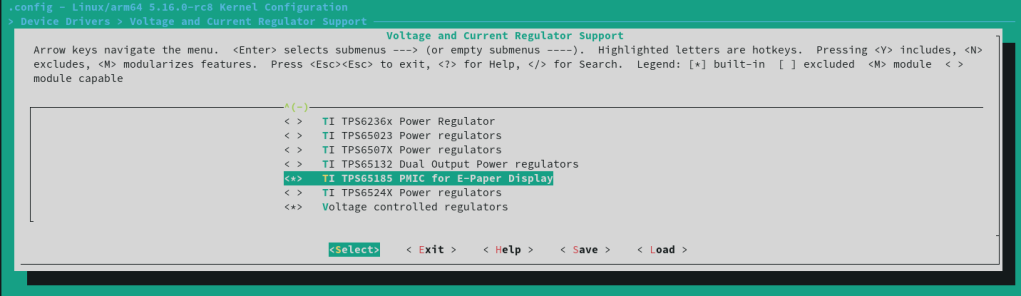

Compiling an ARM Linux kernel with EBC eink interface

First of all, the essentials, which are clearly explained here: Build your first Linux kernel. Make sure you have at least 8 GB of free disk space. Please be aware that building your own Linux kernel needs to be done with care, and it’s a risky operation where you might need to wipe everything if…

-

Making our own ESP32S3 BLE receive JPEG image Firmware

I’ve long been interested in using another means to receive binary data that is more low-consumption oriented than WiFi. And there is a protocol for that, which should be the standard when sending and receiving files over BLE. It’s called L2CAP: Logical Link Control and Adaptation Protocol: L2CAP permits higher-level protocols (such as GATT and SM)…

-

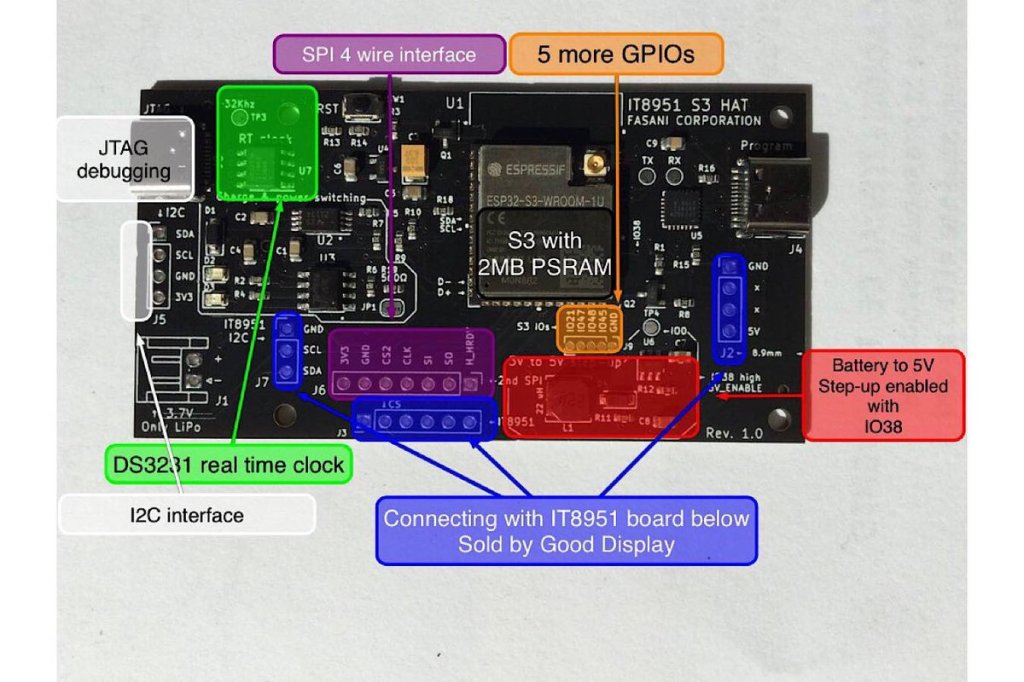

New Cinwrite SPI HAT for IT8951 parallel epaper controllers

At the moment it is only available in the Fasani Corporation store on Tindie. This PCB’s mission is to provide: This Cinwrite PCB is open source hardware that you can explore and even adapt to your needs. The price is 45 USD since I only made 5, and otherwise it would be impossible to cover the costs. But it…

-

Testing Pine64 Quartz64 Model A board

The Quartz64 Model A is powered by a Rockchip RK3566 quad-core ARM Cortex A55 64-Bit Processor with a Mali G-52 GPU. It comes equipped with 2GB, 4GB or 8GB LPDDR4 system memory, and a 128Mb SPI boot flash. There is also an optional eMMC module (up to 128GB) and a microSD slot for booting. So…

-

Moved to Barcelona

Well, it was a hard, frustrating and very tiring experience, to say the least. But after finally signing a one-year contract for the apartment, and many “Nos” for others we liked but didn’t qualify for since we do not have any Spanish work…we are settling down nicely and have already got high-speed internet installed. Now…

-

LVGL to design UX interfaces on epaper

lv_port_esp32-epaper is the latest successful attempt to design UX in C using the Espressif ESP32. If you like the idea, please hit the ★ in my repository fork. What is LVGL? LVGL stands for “Light and Versatile Graphics Library” and allows you to design an object-oriented user interface on supported devices. So far it supports mostly TFT…
