-

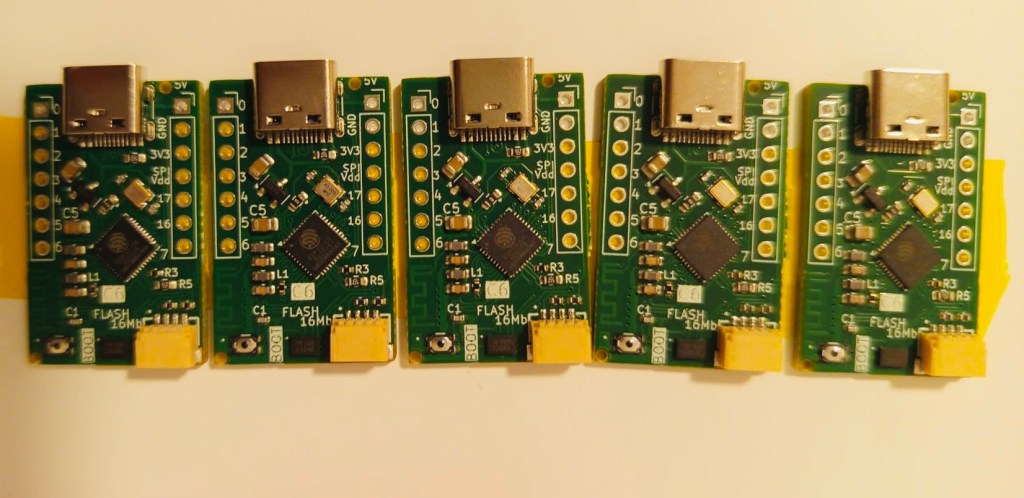

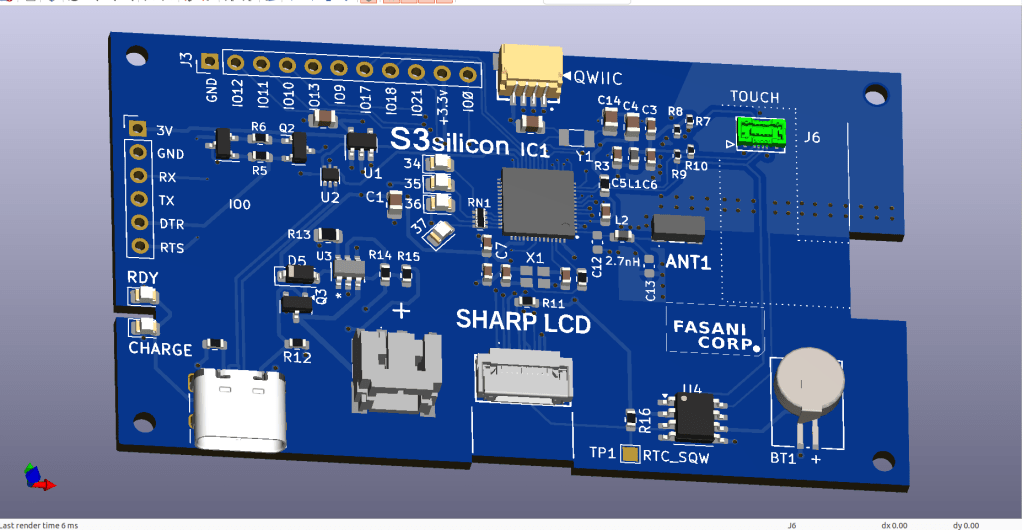

SHARP Controller LCD based on ESP32S3

The evolution of a simple C3 controller board into a full-fledged ESP32S3 “Silicon only” small PCB that can control 400×240 LCDs and all their smaller models. The first LCD controller I designed was for a small, clock-sized 128×128 pixel SHARP LCD, inspired by my friend Larry Bank, who also likes this display technology. The evolution that…

-

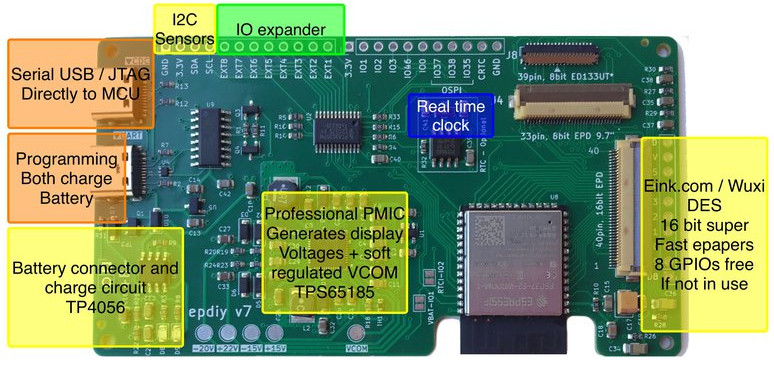

epdiy V7 S3 parallel epaper controller

I wanted to announce that the hardware for epdiy version 7, the latest hardware for the project designed by Valentin Roland, is ready to be tested. You can find the original hardware in the s3_lcd branch; this is my custom modified V7, which exposes 3 connectors: (Now already merged into the main branch) I’ve also been testing…

-

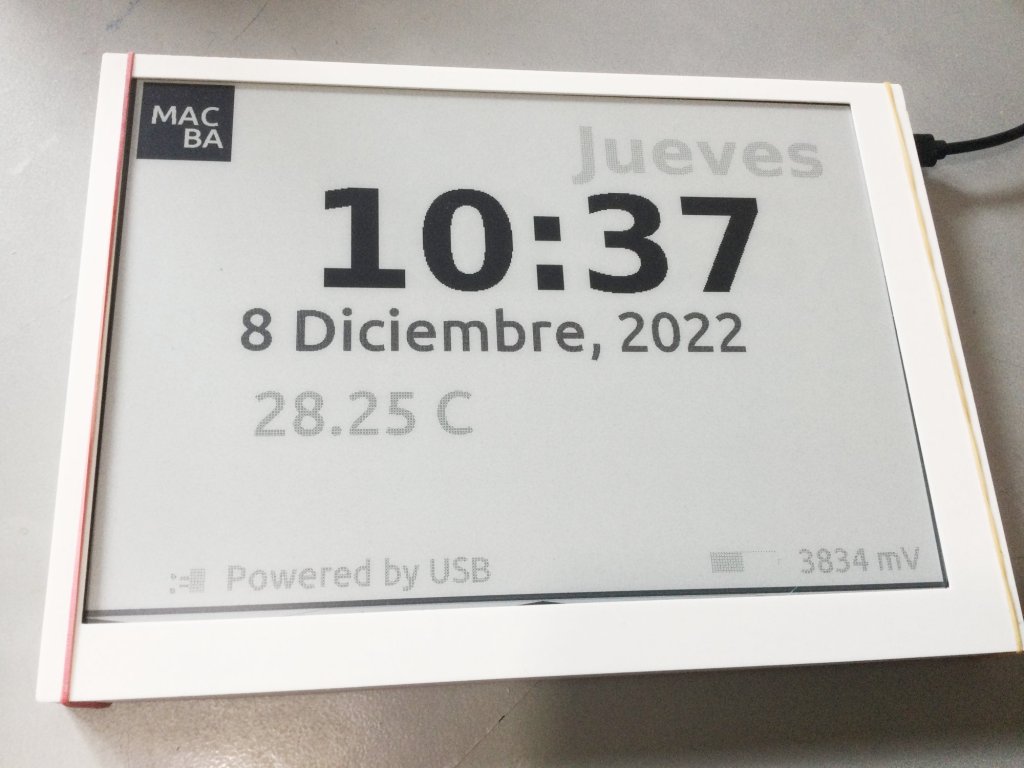

New cases for the 9.7″ parallel displays and proposal for CLB Club

This might be a continuation of the Cinwrite DEXA epaper controller that we designed just after partnering with Freddie from Good-Display.com. The idea is to prepare open source firmware that is ready to be used with different examples and two target controllers: 1 – DEXA-C097 from Good-Display, which additionally needs my Cinwrite ESP32-S3 SPI controller Optionally…

-

Testing touch on small SPI epaper displays

Our touch component FT6X36, now compatible with IDF version 5, is ready to be tested. There are two affordable models that I would like to cover in this post. The first one is the 400×300 FT6336 from Good Display; we can see this one in action (2nd video; the 1st still had the wrong waveform). It’s important to know…

-

LVGL to design UX interfaces on epaper

lv_port_esp32-epaper is the latest successful attempt to design UX in C using the Espressif ESP32. If you like the idea, please hit the ★ in my repository fork. What is LVGL? LVGL stands for “Light and Versatile Graphics Library” and allows you to design an object-oriented user interface on supported devices. So far it supports mostly TFT…

-

CALE.es hits first 100 users

After almost one year running, my service to deliver images for epaper and TFT displays is finally starting to get some adoption. The idea started at the beginning of 2020, when epapers and many great projects like EPDiy on Hackaday started to see early adoption. Our ESP32 firmware does 3 things…

-

Repurposing the MIDI protocol to control LED Matrixes

Meet Remora-matrix, a project that started with the idea to sniff MIDI messages and make simple visuals on an LED matrix. Our previous firmware, Remora, was intended to receive short commands from ORCΛ and make very simple NeoPixel animations on addressable LED strips (WS2812B-like). With this one I decided to go a step further and make…
